
I blocked two of our ranking pages using robots.txt. We lost a position here or there, and we lost all of the featured snippets for the pages. I expected a lot more impact, but the world didn’t end.

Warning

I don’t recommend doing this, and it’s entirely possible that your results will be different from ours.

I was trying to see what impact removing content would have on rankings and traffic. My theory was that if we blocked the pages from being crawled, Google would have to rely on link signals alone to rank the content.

However, I don’t think what I saw was actually the impact of removing the content. Maybe it is, but I can’t say that with 100% certainty, because the impact feels too small. I’ll be running another test to confirm this. My new plan is to delete the content from the page and see what happens.

My working theory is that Google may still be using the content it previously saw on the page to rank it. Google Search Advocate John Mueller has confirmed this behavior in the past.

So far, the test has been running for nearly five months. At this point, it doesn’t look like Google will stop ranking the pages. I suspect that, after a while, it will stop trusting that the content it saw on the page is still there, but I haven’t seen evidence of that happening.

Keep reading to see the test setup and impact. The main takeaway is that accidentally blocking pages that Google already ranks from being crawled with robots.txt probably isn’t going to have much impact on your rankings, and those pages will likely still show in the search results.

I chose the same pages used in the “impact of links” study, except for the article on SEO pricing, because Joshua Hardwick had just updated it. I had already seen the impact of removing the links to these articles and wanted to test the impact of removing the content. As I said in the intro, I’m not sure that’s actually what happened.

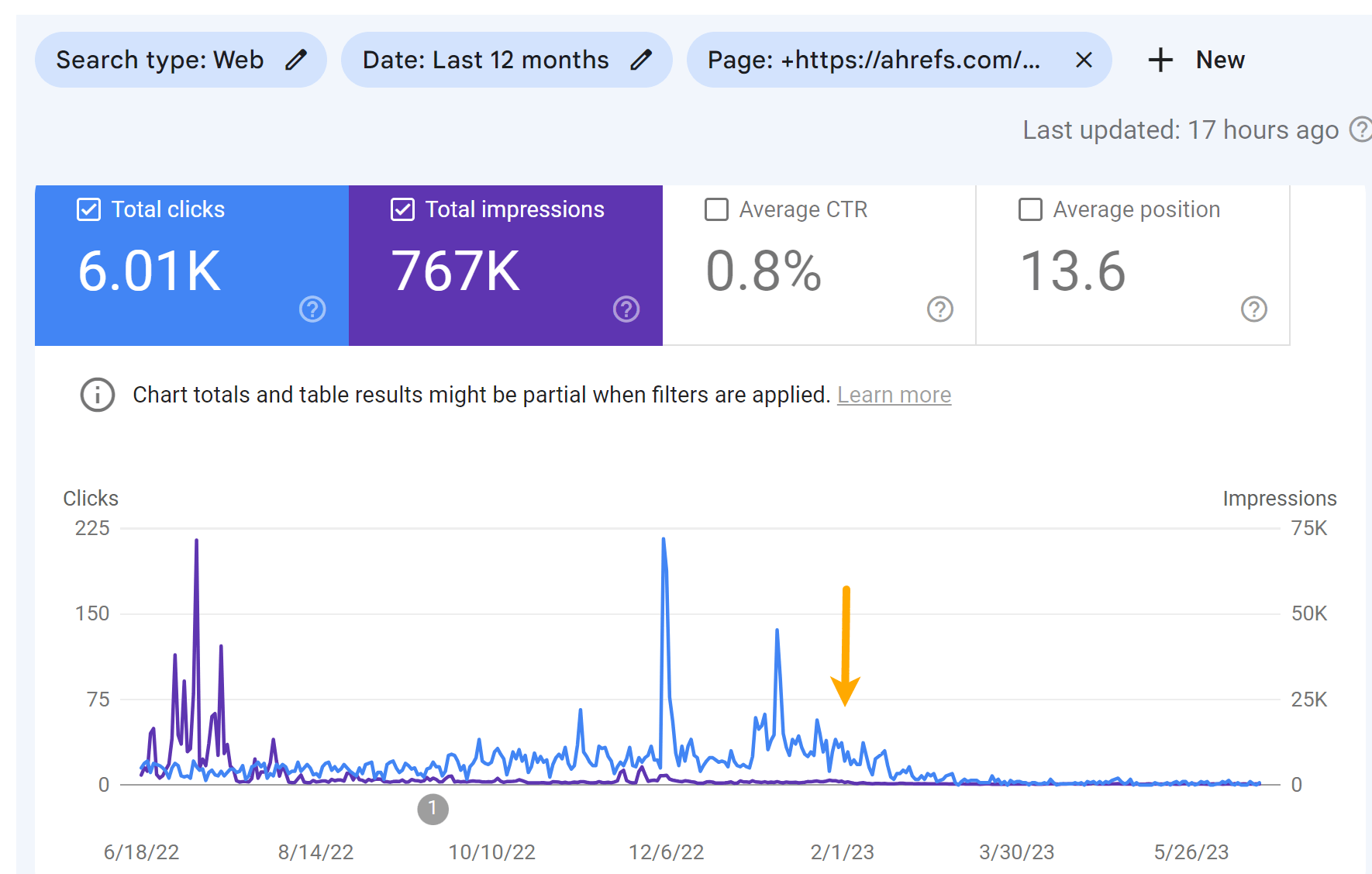

I blocked these two pages, the “Top Bing Searches” and “Top YouTube Searches” articles, on January 30, 2023.

These lines were added to our robots.txt file:

    Disallow: /weblog/top-bing-searches/
    Disallow: /weblog/top-youtube-searches/
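If you want to double-check which URLs rules like these block before deploying them, Python’s standard-library `urllib.robotparser` can evaluate them offline. This is a minimal sketch under a few assumptions: the `User-agent: *` group line is assumed (the parser ignores `Disallow` lines that sit outside a user-agent group), `example.com` is a placeholder domain, and `/weblog/some-other-post/` is a hypothetical unblocked page. Note that this parser only does plain prefix matching and does not implement Google’s `*` and `$` wildcard handling, so treat it as a sanity check rather than a replica of Googlebot.

```python
from urllib.robotparser import RobotFileParser

# Assumed robots.txt: the "User-agent: *" line is an assumption;
# only the two Disallow rules come from the test described above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /weblog/top-bing-searches/
Disallow: /weblog/top-youtube-searches/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text without fetching anything over the network."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# example.com is a placeholder; /weblog/some-other-post/ is a hypothetical page.
for path in [
    "/weblog/top-bing-searches/",
    "/weblog/top-youtube-searches/",
    "/weblog/some-other-post/",
]:
    url = "https://example.com" + path
    status = "crawlable" if parser.can_fetch("Googlebot", url) else "blocked"
    print(path, status)
```

Because matching is by path prefix, each rule also blocks anything nested under the same directory path.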

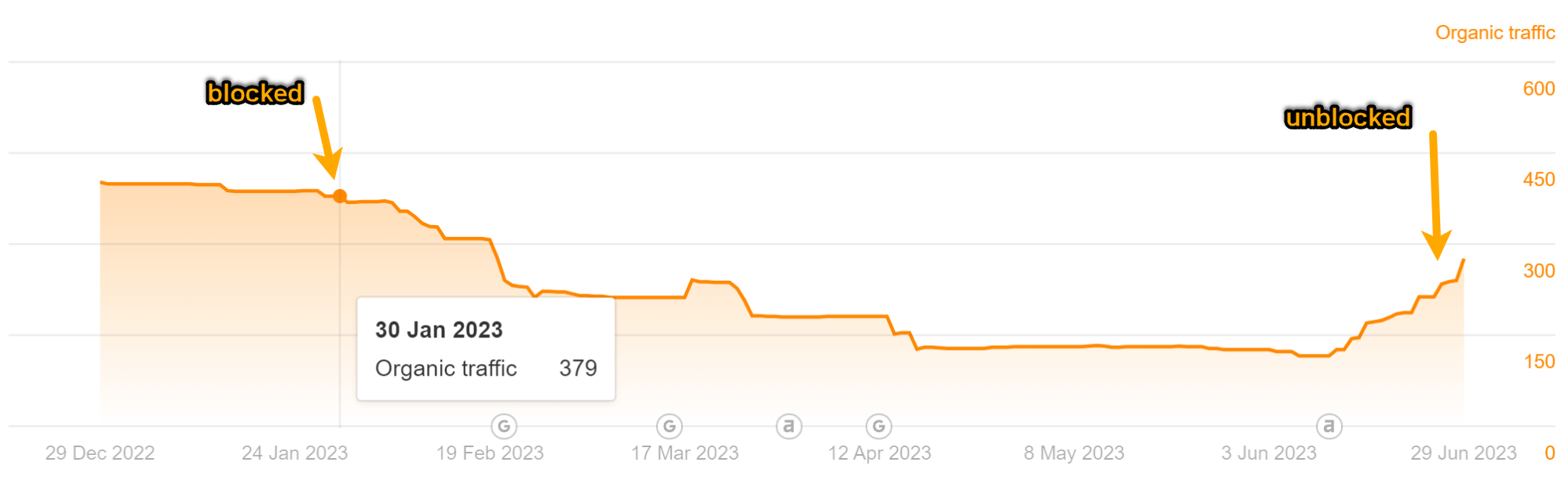

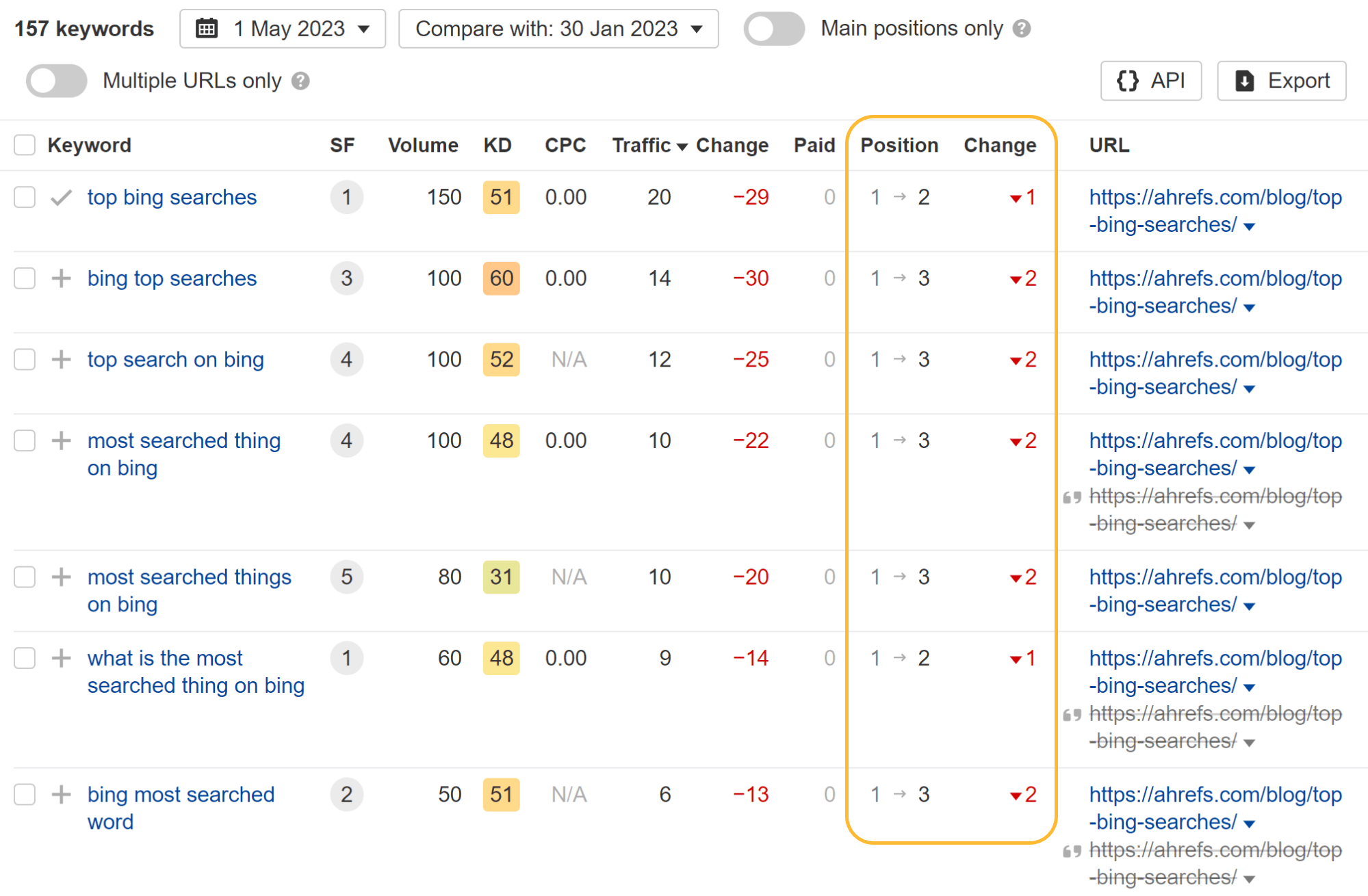

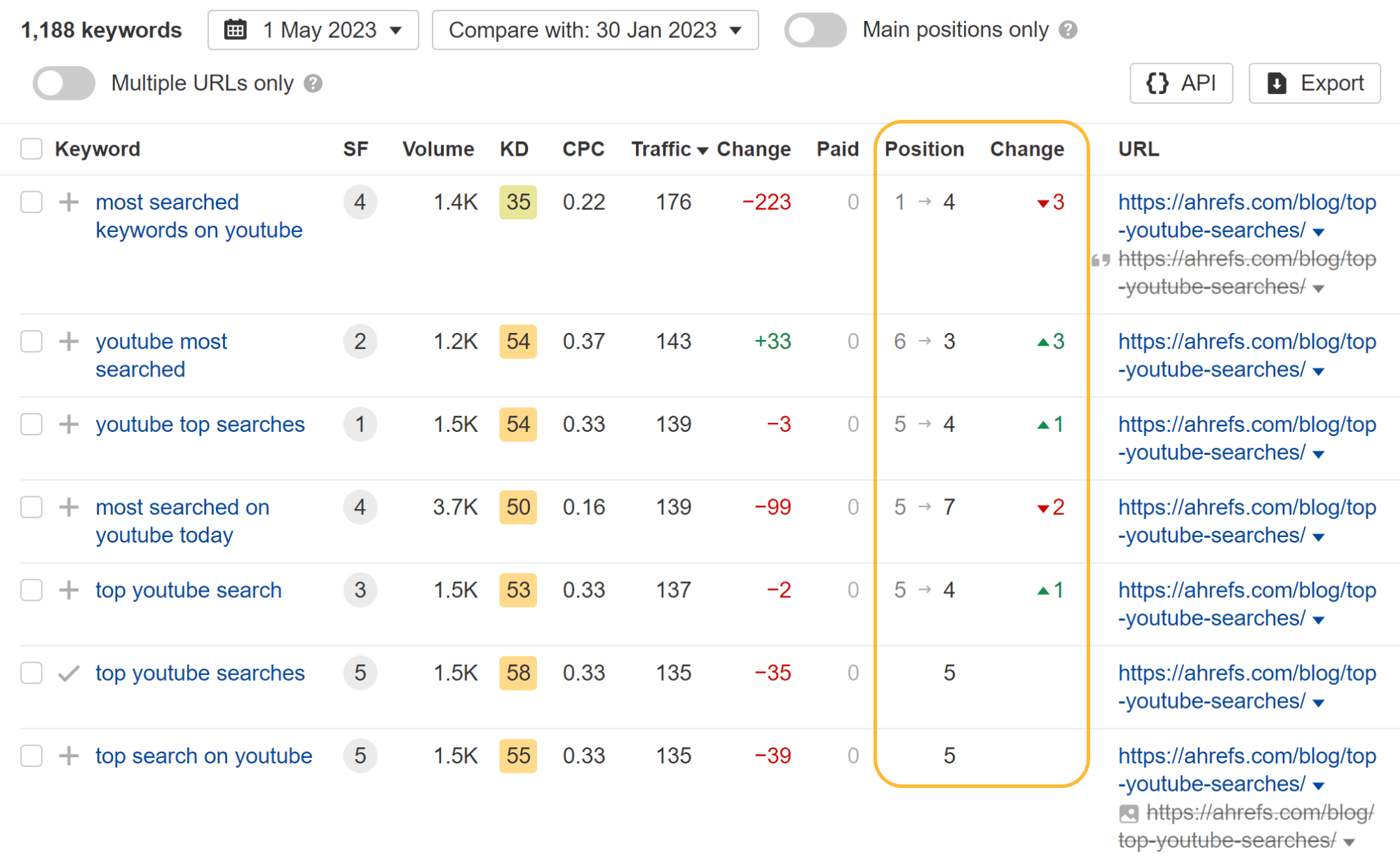

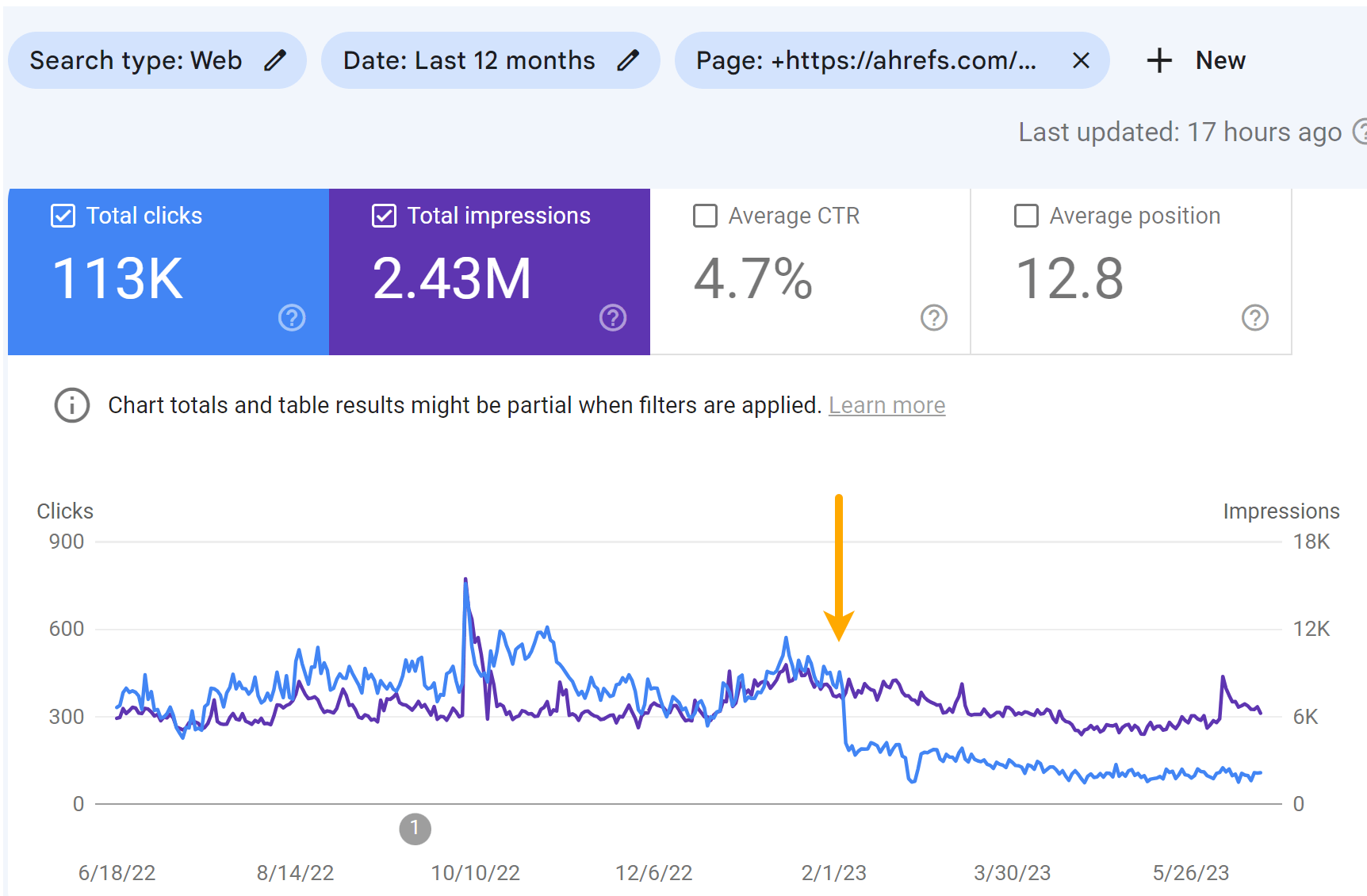

As the charts show, both pages lost some traffic. However, our traffic estimate didn’t change nearly as much as I was expecting.

Looking at the individual keywords, you can see that some keywords lost a position or two, while others actually gained positions while the pages were blocked from crawling.

The most interesting thing I noticed is that the pages lost all of their featured snippets. My guess is that being blocked from crawling made them ineligible for featured snippets. When I later removed the block, the article on Bing searches quickly regained some snippets.

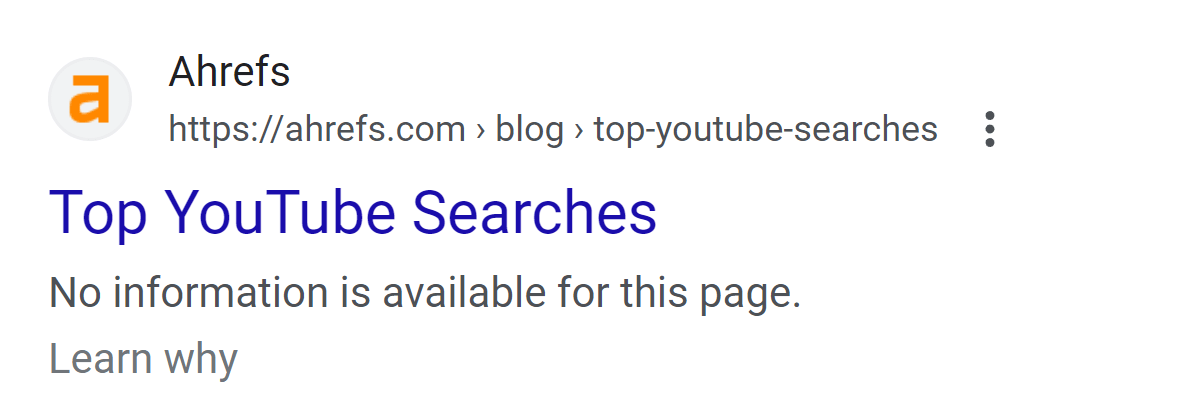

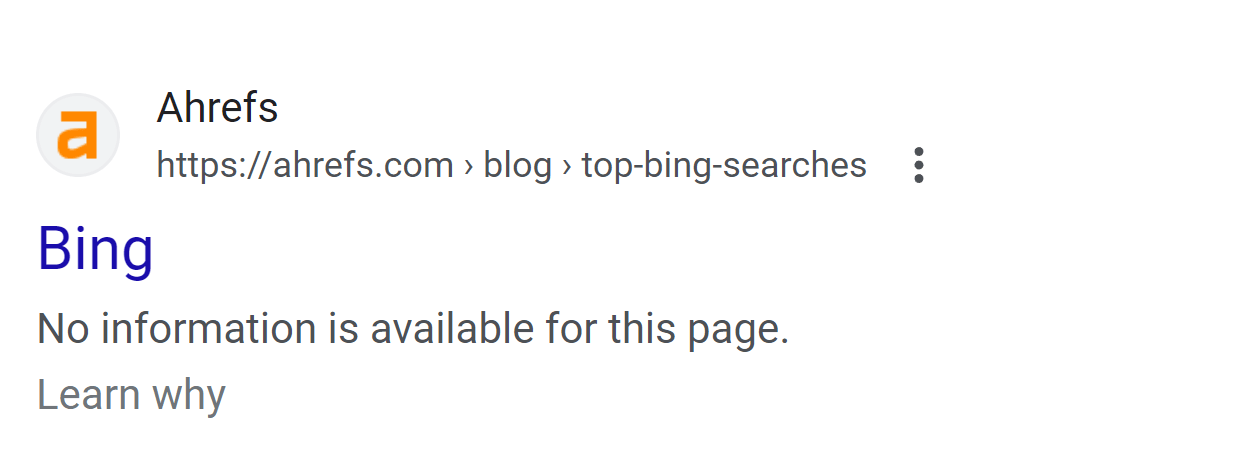

The most noticeable impact on the pages was on the SERP. The pages lost their custom titles and displayed a message saying that no information was available in place of the meta description.

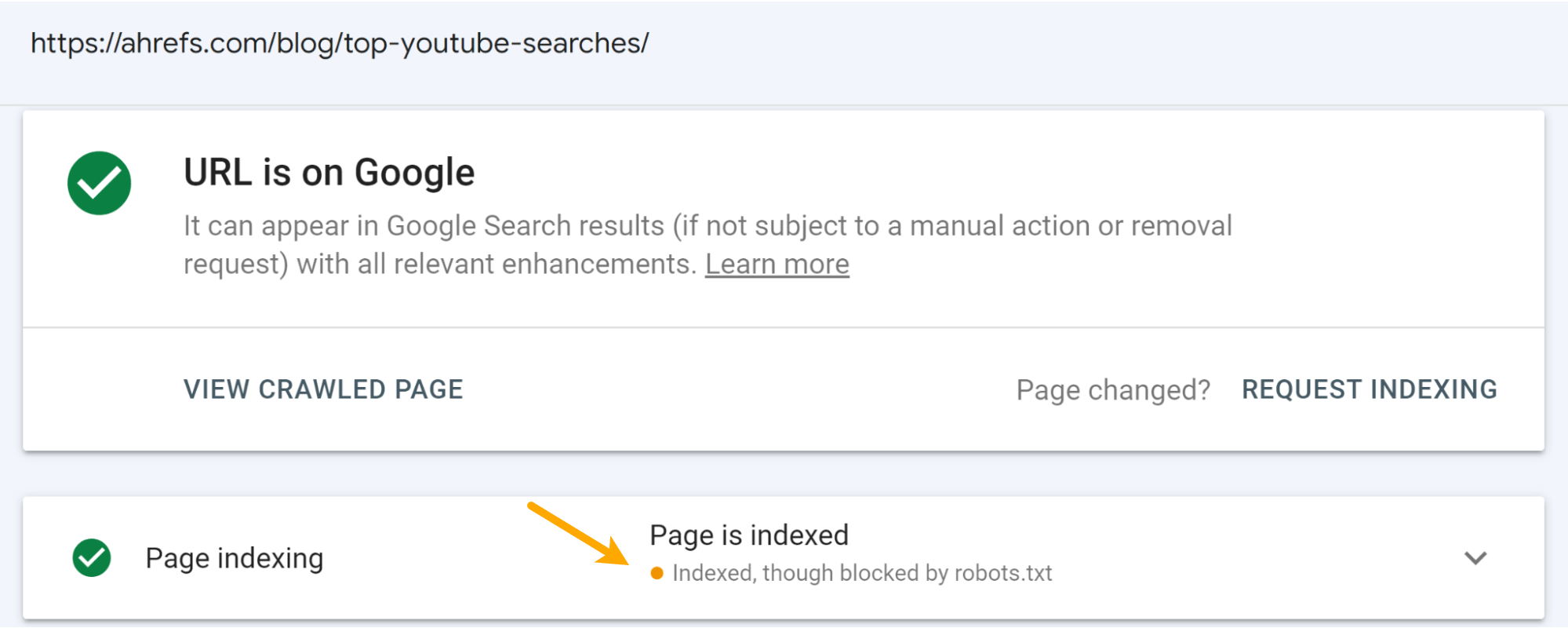

This was expected. It happens whenever a page is blocked by robots.txt. Additionally, if you inspect the URL in Google Search Console, you’ll see the “Indexed, though blocked by robots.txt” status.

I believe the message on the SERPs hurt clicks to the pages more than the ranking drops did. You can see some drop in impressions, but a larger drop in the number of clicks for both articles.
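One way to frame this reasoning: click-through rate (CTR) is clicks $C$ divided by impressions $I$, so the change in CTR is the change in clicks relative to the change in impressions. This is a general identity, not figures from this test:

$$
\frac{\mathrm{CTR}_{\text{after}}}{\mathrm{CTR}_{\text{before}}}
= \frac{C_{\text{after}} / I_{\text{after}}}{C_{\text{before}} / I_{\text{before}}}
= \frac{C_{\text{after}} / C_{\text{before}}}{I_{\text{after}} / I_{\text{before}}}
$$

If clicks fall by a larger fraction than impressions, this ratio is below 1, meaning a smaller share of the people who saw the result clicked on it. That pattern is consistent with the snippet message, and not only the ranking changes, suppressing clicks.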

Traffic for the “Top YouTube Searches” article:

Traffic for the “Top Bing Searches” article:

Final thoughts

I don’t think any of you will be surprised by my commentary on this. Don’t block pages you want indexed. It hurts. Not as badly as you might think, but it still hurts.
